Google's latest Tensor processors take an environment-friendly route, but what about the cost?

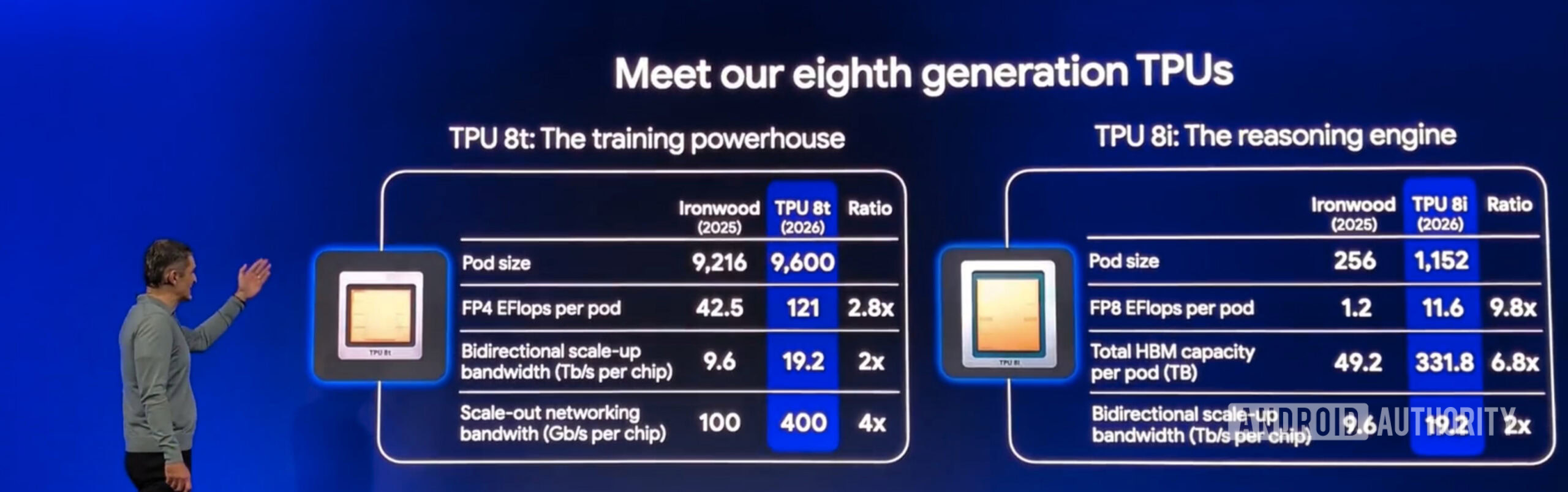

- Google has announced the eighth generation of Tensor Processing Units (TPUs) for its data centers.

- The new class of TPUs is split based on usage, with separate units for training and inference.

- Google says this reduces energy requirements for actual end use, which in turn should benefit the environment.

Last year at its Google Cloud Next event, Google announced the Ironwood class of tensor processing units (TPUs) that power its data centers. These TPUs, designed with the AI era in mind, focused on large-scale inference: an AI’s ability to draw conclusions or make predictions about inputs it has not seen before, based on what it has been trained on (basically what chatbots do). This year, it has made further advancements to the TPU hardware and is now splitting compute to serve training and inference differently.

At Cloud Next 2026, Google announced its eighth generation of TPU, with different architectures for different purposes. The newly introduced TPUs include the TPU 8t, which will be used for training AI models, and the TPU 8i, which will be specific to inference-related duties.

Google says the split has been made to address the different power and computing requirements of the two processes. According to the company, the approach will help its data centers reduce energy consumption, thereby reducing operating costs and lessening AI’s negative impacts on the environment. That means your use of Gemini could soon take up much less water (hopefully!) to keep data centers cooled.

Training neural networks demands high-bandwidth memory and large clusters of processing units because it requires updating billions of parameters every second. Training relies on a process called “backward propagation of errors” (backpropagation), which runs countless feedback loops that measure the network’s errors on the training set and adjust it until its outputs become accurate. It is basically like testing a person until they tell you the correct answer.

Meanwhile, inference is less intensive and can run on less capable hardware with much lower memory consumption. Using the same training-grade hardware for inference therefore results in much higher costs than necessary, which in turn increases the effective cost of inference-related tasks.
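To make the difference between the two workloads concrete, here is a minimal sketch in plain Python. It is a toy one-neuron model, not anything resembling Google's software or TPU code, and every name in it (`predict`, `train_step`, `train`, the sample data) is made up for illustration. The point is only that training repeats a forward pass, a backward pass, and a parameter update many times, while inference is a single forward pass.

```python
# Toy model y = w * x + b. All names and numbers are illustrative assumptions.

def predict(params, x):
    """Inference: one forward pass, no gradients, no parameter updates."""
    w, b = params
    return w * x + b

def train_step(params, batch, lr=0.05):
    """Training: forward pass, backward pass (gradients), then an update."""
    w, b = params
    grad_w = 0.0
    grad_b = 0.0
    for x, y in batch:
        error = predict((w, b), x) - y          # forward pass and error
        grad_w += 2 * error * x / len(batch)    # gradient of mean squared error w.r.t. w
        grad_b += 2 * error / len(batch)        # gradient of mean squared error w.r.t. b
    # Move each parameter a small step against its gradient.
    return (w - lr * grad_w, b - lr * grad_b)

def train(batch, steps=2000):
    """Repeat the feedback loop many times, starting from zeroed parameters."""
    params = (0.0, 0.0)
    for _ in range(steps):
        params = train_step(params, batch)
    return params

# Training set generated from y = 2x + 1.
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

params = train(data)           # expensive: thousands of passes over the data
print(predict(params, 4.0))    # cheap: one pass; should land very close to 9.0
```

In this sketch, training touches every example 2,000 times and has to keep gradients around, while answering a new query costs one multiplication and one addition. Real models differ by many orders of magnitude in scale, but the same asymmetry is what makes separate training and inference hardware plausible.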

Google previously introduced the TPU v5e (where “e” supposedly stands for efficiency) for much smaller-scale operations. The new TPU 8i appears to be a large-scale adaptation of that earlier hardware. Amazon has also been attempting to achieve the same effect with AWS Inferentia.

While Google has pointed out the environmental benefits of using dedicated inference TPUs, we haven’t seen any promises about reducing costs for customers. It remains to be seen whether Google will pass on some of the benefits to its customers or reserve the profits for itself and its corporate allies.